Beyond the Checklist: Human-Centric Governance as the New License to Lead


Arif Uz Zaman Badhon




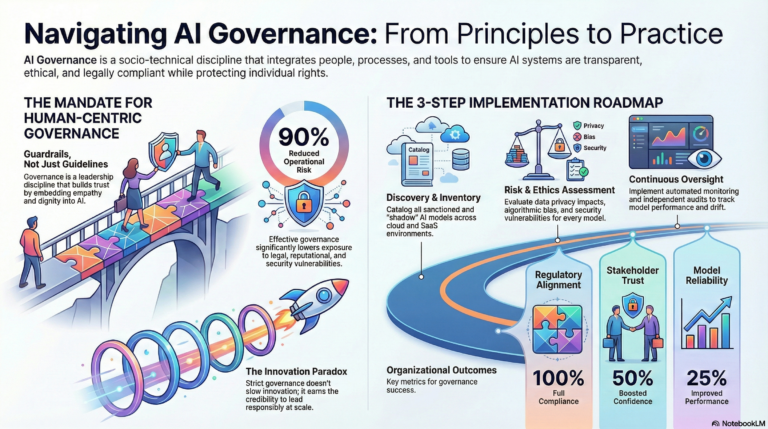

The current acceleration of Artificial Intelligence adoption represents more than a mere technological trend; it is a tectonic shift in our socio-technical landscape. As algorithms migrate from back-office automation to the sovereign core of decision-making in healthcare, finance, and public infrastructure, many organizations are rushing into the frontier without a moral or operational compass. This frantic pace has birthed a dangerous Trust Paradox: AI systems are becoming exponentially more capable, yet the “principled” nature of these systems is often treated as an afterthought, leading to an erosion of the digital social contract between enterprises and the public.

To bridge this governance gap, leadership must recognize that oversight is not a bureaucratic drag on velocity—it is the strategic nucleus of sustainable innovation. We are moving past the era where a simple “compliance checklist” suffices. In this new paradigm, governance is the ontological foundation that ensures AI remains reliable, safe, and aligned with human values. It is, quite literally, the license to lead in an increasingly skeptical world.

The central thesis for the modern executive is clear: Governance is not a barrier to innovation; it is the essential guardrail that allows you to drive faster and more confidently. By shifting from a “technical-fix” mindset to a holistic, socio-technical approach, organizations can transform AI from a source of systemic risk into a profound tool for augmented humanity.

The Accountability Trap: Moving Beyond “No One” and “Everyone”

A recurring pathology in organizational AI strategy is the accountability vacuum. When interrogated about who bears responsibility for algorithmic outcomes, many entities default to three “bad” responses: the overtly terrible “no one,” the ostrich-like “we don’t use AI” (ignoring the shadow AI used by employees), or the most insidious: “everyone.” As the IBM leadership frameworks suggest, when accountability is diffused across an entire organization without a clear locus of control, it effectively vanishes. This diffusion creates a “socio-technical gap” where technical teams view ethics as a leadership problem, while leadership views it as a technical bug to be patched.

Escaping this trap requires more than a policy update; it demands a dedicated leader with a funded mandate, such as a Chief AI Ethics Officer or a similar role with a permanent seat at the table. This leader must bridge value alignment with technical execution, moving beyond the “lawful but awful” threshold where systems may meet the letter of the law while violating the spirit of human dignity. True accountability means being a “darn good teacher,” operationalizing principles like fairness across a multidisciplinary team that includes the CISO, data scientists, and legal counsel.

“There’s also a recognition that you can have AI models be lawful but awful, which means their purview, their responsibility actually has the push into ethics.”

The Innovation Paradox: Why Guardrails Actually Make You Faster

The prevailing corporate myth suggests that governance is a brake on progress. In reality, the opposite is true: robust guardrails are what allow for high-stakes deployment. This is the Innovation Paradox. In complex environments like fintech or banking, governance frameworks such as Gartner’s AI TRiSM (Trust, Risk, and Security Management) provide the foundation that makes automation trustworthy. For example, when a banking system uses AI for predictive maintenance on core payment rails, it is the governed oversight—not the algorithm alone—that prevents catastrophic outages during peak transaction days.

Treating governance as a leadership discipline, rather than a regulatory hoop, provides the traceability needed to scale. As frameworks like the EU AI Act, with its risk-based approach, and India’s Digital Personal Data Protection Act (DPDPA) begin to codify these requirements, organizations that have already embedded transparency and human oversight into their design phase will possess a significant competitive edge. They are not jumping through hoops; they are building the infrastructure of public safety.

“AI Governance doesn’t slow innovation, it gives it the credibility to lead responsibly.”

Cracking the Black Box: Why “Explainability” is the New Privacy

In our current era, the demand for “Explainable AI” (XAI) has transcended technical preference to become a fundamental human right. As we move away from opaque “Black Box” models toward interpretable “White Box” architectures—like linear regression or decision trees—we are acknowledging that a decision must be justifiable not only statistically but ethically. In high-stakes sectors like healthcare or BFSI (Banking, Financial Services, and Insurance), offering a human-understandable rationale is no longer optional; it is a prerequisite for agency and control.

Explainability is the “New Privacy” because it protects the individual’s right to understand the logic that governs their life. If an AI rejects a loan application or a medical treatment, the logic must be visualized and documented. Without this, even a legally compliant model remains “awful” because it lacks the transparency necessary for human trust. True governance ensures that explainability is not a post-hoc feature, but an intrinsic design requirement.
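To make the loan example concrete, here is a minimal sketch of a “White Box” decision in which every feature's contribution to the outcome can be read off and documented. The feature names, weights, bias, and approval threshold are hypothetical values chosen for illustration, not parameters from any real credit model.

```python
import math

# Hypothetical weights for an illustrative loan model (assumed, not from a real system).
WEIGHTS = {
    "debt_to_income": -3.0,
    "years_employed": 0.25,
    "missed_payments": -0.75,
}
BIAS = 1.0
THRESHOLD = 0.5  # assumed rule: approve when predicted probability is at least 0.5


def explain_decision(applicant: dict) -> dict:
    """Score an applicant and return each feature's contribution to the score."""
    # In a linear model, a feature's contribution is simply weight * value.
    contributions = {name: WEIGHTS[name] * applicant[name] for name in WEIGHTS}
    logit = BIAS + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-logit))
    # Sort ascending so the factors pushing hardest toward rejection come first.
    ranked = sorted(contributions.items(), key=lambda item: item[1])
    return {
        "approved": probability >= THRESHOLD,
        "probability": probability,
        "reasons": ranked,
    }


result = explain_decision(
    {"debt_to_income": 0.5, "years_employed": 2.0, "missed_payments": 3.0}
)
for feature, contribution in result["reasons"]:
    print(f"{feature}: {contribution:+.2f}")
print("approved:", result["approved"])
```

Because the score is a plain weighted sum, the ranked list doubles as the documented rationale: a rejected applicant can be told which factors weighed most heavily against them. Opaque models would need a separate attribution method to produce a comparable record.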

“Explainability is not a feature; it’s a right.”

Humanizing the Guardrails: Protecting Dignity at the Data Layer

Human-centric governance requires us to protect people, not just data points. The seeds of algorithmic bias are almost always sown at the ingestion layer. When recruitment systems are trained on legacy hiring data, they don’t just process information; they reinforce historical patterns of exclusion. To humanize AI, we must treat “cleaning data” as a moral act of protecting fairness and integrity at the source.

To operationalize this, organizations must adopt a rigorous “5-Step AI Governance” approach, focusing here on four strategic mandates:

• AI Model Discovery: Maintaining a formal, real-time inventory of all AI models, including “Shadow AI.”

• Risk Assessment: Categorizing models based on their potential impact on safety, privacy, and fairness.

• Controlling Inputs and Outputs: Validating data provenance and ensuring diversity in datasets to prevent unlawful scraping and bias.

• Data Minimization vs. Utility: Striking the balance between the need for diverse data and the ethical requirement to limit data collection to specific, consented purposes.
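The first two mandates above, model discovery and risk assessment, can be sketched as a small inventory with a tiering rule. This is only an illustration under stated assumptions: the `ModelRecord` fields, the `RiskTier` names, and the tiering logic are hypothetical and are not the legal categories of any regulation.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    # Illustrative tier names (assumed); real tiers would come from policy or law.
    MINIMAL = 1
    LIMITED = 2
    HIGH = 3


@dataclass
class ModelRecord:
    name: str
    owner: str                  # accountable person or team; empty if unknown
    approved: bool              # False marks a model found outside formal review ("Shadow AI")
    affects_safety: bool
    uses_personal_data: bool
    decides_about_people: bool


def assess_risk(model: ModelRecord) -> RiskTier:
    """Rule-of-thumb tiering based on impact on safety, privacy, and fairness."""
    if model.affects_safety or model.decides_about_people:
        return RiskTier.HIGH
    if model.uses_personal_data:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


def review_queue(inventory: list) -> list:
    """List models highest-risk first, with unapproved models ahead within a tier."""
    ranked = sorted(inventory, key=lambda m: (-assess_risk(m).value, m.approved))
    return [(m.name, assess_risk(m), m.approved) for m in ranked]


inventory = [
    ModelRecord("faq-chatbot", "support", True, False, False, False),
    ModelRecord("resume-screener", "", False, False, True, True),
    ModelRecord("churn-forecast", "marketing", True, False, True, False),
]
queue = review_queue(inventory)
for name, tier, approved in queue:
    print(name, tier.name, "approved" if approved else "UNAPPROVED")
```

In this sketch the unapproved resume screener surfaces at the top of the queue, which is the point of keeping an inventory: a model nobody owns and that decides about people is the first thing a governance lead should see.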

Securing the Frontier: Navigating Hallucinations and Model Tricking

The technical vulnerabilities of modern AI, including hallucinations, jailbreaking, and model tricking (adversarial machine learning), are not merely IT bugs; they are governance failures that create profound security blind spots. Traditional security frameworks are often unequipped for the dynamic nature of LLMs, where an attacker can use subtle input perturbations to deliberately induce incorrect predictions. To defend this frontier, we must move toward frameworks like MITRE ATLAS (Adversarial Threat Landscape for Artificial-Intelligence Systems) to map and mitigate specific AI threats.

Securing these systems requires the following strategic mandates:

• Adversarial Training: Proactively exposing models to attack scenarios during the training phase to increase robustness.

• Federated Learning: Utilizing decentralized training to enhance privacy and reduce the risk of centralized data breaches.

• Continuous Monitoring & Drift Detection: Implementing real-time tracking of model performance to detect when an AI begins to deviate from its intended logic.

• Human-in-the-Loop: Ensuring mandatory human intervention for any high-stakes decision-making process.
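The last two mandates above can be combined in a small sketch: a drift check on model scores that feeds a human-review gate. The Population Stability Index (PSI) used here is one common drift statistic, swapped in for illustration; the function names, the five-bin layout, the assumption that scores lie in [0, 1], and the 0.2 limit are all placeholders to be tuned, not prescribed values.

```python
import math


def population_stability_index(expected: list, actual: list, bins: int = 5) -> float:
    """PSI between a baseline sample and a recent sample of scores assumed to lie in [0, 1]."""
    def bin_shares(values):
        counts = [0] * bins
        for v in values:
            # Clamp so that 1.0 (or a stray out-of-range score) lands in a valid bin.
            index = min(max(int(v * bins), 0), bins - 1)
            counts[index] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = bin_shares(expected), bin_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def needs_human_review(high_stakes: bool, psi: float, psi_limit: float = 0.2) -> bool:
    """Route to a person when the decision is high-stakes or the model has drifted."""
    return high_stakes or psi > psi_limit


baseline = [0.1, 0.3, 0.5, 0.7, 0.9] * 20
recent_same = list(baseline)
recent_shifted = [0.7, 0.9, 0.9, 0.9, 0.9] * 20

psi_same = population_stability_index(baseline, recent_same)
psi_shifted = population_stability_index(baseline, recent_shifted)
print("stable sample flagged: ", needs_human_review(False, psi_same))
print("shifted sample flagged:", needs_human_review(False, psi_shifted))
```

The design choice worth noting is that human review is triggered by either condition: a high-stakes decision always goes to a person, while a routine decision is escalated only when the score distribution has moved away from its baseline.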

Conclusion: Toward “Augmented Humanity”

The future of the global economy belongs to those who govern with the deepest integrity. As we pivot from the concept of “Artificial Intelligence” to “Augmented Humanity,” the goal of governance becomes clear: to enhance human judgment, not replace it. To reach this future, organizations should follow a disciplined roadmap:

1. Assessment: Audit current practices and establish a baseline.

2. Policy Development: Formalize internal ethics and governance guidelines.

3. Setup & Tools: Implement the technological infrastructure, such as AI Security Posture Management (AI-SPM), to monitor posture.

4. Training & Adoption: Foster a culture of AI literacy across all multidisciplinary teams.

5. Continuous Improvement: Regularly adapt the framework as the regulatory and technical landscape evolves.

As you look at your own organization’s AI initiatives, you must ask: Are your systems merely “lawful but awful”—meeting the bare minimum of regulation while risking the trust of your stakeholders? Or are you investing in the funded mandate and ethical scaffolding required to earn the true license to lead? The answer will define your legacy in the age of AI.

Image Source: Created by NotebookLLM